Answer:

HDR, or High Dynamic Range, is a video format whose signal carries metadata that lets a compatible TV display a wider range of colors, with higher brightness and contrast, when playing HDR content.

Specifications that play a significant role in HDR are the TV’s brightness, contrast ratio, color gamut, and resolution. The most common HDR formats are HDR10, HDR10+ and Dolby Vision.

Almost all new TVs support HDR, so if you're buying a TV, you should definitely get one that does. However, how good HDR actually looks on your TV will depend on its panel type, peak brightness, contrast ratio, and color gamut.

Compared to 1080p, a 4K TV has four times as many pixels, which dramatically improves detail clarity and immersion. But what about color quality and contrast ratio?
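The "four times" figure is simple arithmetic. The sketch below assumes consumer 4K UHD (3840 × 2160) rather than the slightly wider DCI 4K cinema format (4096 × 2160); the dictionary and function names are illustrative only.

```python
# Assumption: "4K" means consumer 4K UHD and "1080p" means Full HD.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
}


def pixel_count(width: int, height: int) -> int:
    """Total number of pixels on a width x height panel."""
    return width * height


full_hd = pixel_count(*RESOLUTIONS["1080p"])
uhd = pixel_count(*RESOLUTIONS["4K UHD"])

print(f"1080p:  {full_hd:,} pixels")   # 1080p:  2,073,600 pixels
print(f"4K UHD: {uhd:,} pixels")       # 4K UHD: 8,294,400 pixels
print(f"Ratio:  {uhd // full_hd}x")    # Ratio:  4x
```

Doubling both the width and the height is what makes the count four times larger rather than twice as large.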

If you want to go a step further when buying a new TV and get an even more vibrant image, you will need HDR (High Dynamic Range).

In short, HDR content carries metadata that tells the TV how to display it, allowing more lifelike and distinct colors. However, just having an HDR-capable TV isn't enough; there are several other things to consider.

First of all, HDR only works with content made for HDR, be it video games, HDR Blu-rays, or your favorite TV shows on Netflix, Amazon Prime Video, and other services. So, make sure you'll have something worth watching on an HDR TV.

Secondly, not all HDR TVs are the same. HDR won't improve the picture much if the TV has a poor contrast ratio, low brightness, and a narrow color gamut.

Lastly, there are several HDR formats, including HDR10, HDR10+, Dolby Vision, HLG, and Advanced HDR. In this article, we'll focus on the two most popular and widespread ones: HDR10 and Dolby Vision.

HDR vs. Non-HDR

To truly see how HDR affects picture quality, you need an HDR-capable display.

This also means that any HDR vs. non-HDR comparison image you see online is merely emulated to illustrate the point.

However, these emulated pictures are not far off. HDR expands the color gamut (and maps it properly) and extends contrast and brightness, which significantly improves detail in the highlights and shadows of the image.

Most importantly, proper HDR allows you to see the video game or movie the way its creators intended.

Perhaps the easiest way to explain the difference between SDR and HDR is through brightness performance. Let's say you have an SDR display with an LED panel, no local dimming, and a maximum brightness of 250 cd/m² (also called nits, the unit of luminance).

If you crank the brightness up to 100/100 and display an image of the Moon against a black sky, the Moon will be shown at, for example, 250 nits, while the black background will sit at around 0.25 nits on an IPS panel (1000:1 contrast ratio) or ~0.08 nits on a VA panel (~3000:1 contrast ratio).

True black would be 0 nits, but since a typical display like this is edge-lit, with a backlight that stays on across the whole screen, some light always passes through the panel, raising the black level.

If you were to lower the brightness to 50/100, the Moon would naturally drop to ~125 nits, and the black level would drop proportionally, maintaining a similar contrast ratio.
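Without local dimming, the black level follows directly from the panel's native contrast ratio: black = white ÷ contrast. The sketch below reproduces the figures from this example. The 250-nit peak and the 1000:1 and 3000:1 ratios come from the text; treating the brightness slider as linear is an assumption (real sliders don't necessarily map that way), and the function names are hypothetical.

```python
MAX_NITS = 250.0                       # peak of the example SDR display
CONTRAST = {"IPS": 1000, "VA": 3000}   # typical native contrast ratios from the example


def white_level(setting: int, max_nits: float = MAX_NITS) -> float:
    """Luminance of full white at a 0-100 brightness setting.

    Assumption: the slider scales the backlight linearly.
    """
    return max_nits * setting / 100


def black_level(white_nits: float, contrast_ratio: float) -> float:
    """Black luminance implied by a fixed native contrast ratio."""
    return white_nits / contrast_ratio


for setting in (100, 50):
    white = white_level(setting)
    for panel, ratio in CONTRAST.items():
        black = black_level(white, ratio)
        print(f"{setting}/100, {panel}: white {white:.0f} nits, black {black:.3f} nits")
```

Halving the backlight halves both white and black, so their ratio, and therefore the contrast, stays the same.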

Now, on a proper HDR display, brightness works a bit differently when viewing HDR content.

If the creator of this frame intended the Moon to have a brightness of 1000 nits against a 0-nit background, an HDR display will try to display exactly that.

It can do so thanks to either an OLED panel, whose self-emissive pixels can switch off completely, or an LED panel with FALD (full-array local dimming), which can individually dim the areas of the screen that are supposed to be dark without greatly affecting the parts that should remain bright.
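The idea behind zone dimming can be sketched in a few lines. This is a deliberately simplified, hypothetical model (a one-dimensional strip of pixels, no light spreading between zones): each zone's backlight is set to its brightest pixel, and the LCD layer can only block light down to backlight ÷ native contrast. Setting the zone size to the whole strip models a display with no local dimming.

```python
from typing import List


def simulate_backlight(target: List[float], zone_size: int,
                       peak_nits: float, native_contrast: float) -> List[float]:
    """Luminance (nits) an LCD can actually show for each pixel in a 1-D strip.

    Hypothetical model: each zone's backlight matches the brightest pixel it
    contains (capped at peak_nits); the LCD layer passes at most that much
    light and can block it only down to backlight / native_contrast.
    """
    shown: List[float] = []
    for start in range(0, len(target), zone_size):
        zone = target[start:start + zone_size]
        backlight = min(max(zone), peak_nits)
        floor = backlight / native_contrast  # light leaking through "black" pixels
        shown.extend(min(max(t, floor), backlight) for t in zone)
    return shown


# A bright Moon (1000 nits) on a black (0-nit) sky, on a 3000:1 VA-type panel.
frame = [0.0, 0.0, 0.0, 1000.0, 1000.0, 0.0, 0.0, 0.0]

no_dimming = simulate_backlight(frame, zone_size=len(frame), peak_nits=1000, native_contrast=3000)
fald = simulate_backlight(frame, zone_size=2, peak_nits=1000, native_contrast=3000)

print(f"{no_dimming[0]:.3f}")  # 0.333: one global backlight leaks into the sky
print(fald[0])                 # 0.0: this sky zone's backlight is off
```

In the FALD case, the dark pixel that shares a zone with the Moon (`fald[2]`) still leaks about 0.333 nits, which is a crude picture of the blooming sometimes visible around bright objects on local-dimming LCDs.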

If your HDR display can't reach 1000 nits, it will get as bright as it can (for instance, 800 nits); if it can get even brighter, it will still aim for 1000 nits to avoid overexposing the image. How closely your display follows the creator's intent depends on its HDR calibration, that is, how accurately it tracks the PQ EOTF (Perceptual Quantizer electro-optical transfer function) curve.
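The PQ curve is published in SMPTE ST 2084. It maps a normalized signal value (0 to 1) to an absolute luminance of up to 10,000 nits. The sketch below implements it and its inverse with the constants from that standard. The last function, `displayed_nits`, is a hypothetical, naive hard clip standing in for the targeting behavior described above; real TVs usually apply a smoother roll-off near their peak.

```python
# PQ (Perceptual Quantizer) constants from SMPTE ST 2084.
M1 = 2610 / 16384          # 0.1593017578125
M2 = 2523 / 4096 * 128     # 78.84375
C1 = 3424 / 4096           # 0.8359375
C2 = 2413 / 4096 * 32      # 18.8515625
C3 = 2392 / 4096 * 32      # 18.6875
PQ_PEAK_NITS = 10000.0     # the curve is defined up to 10,000 nits


def pq_eotf(signal: float) -> float:
    """Normalized PQ signal (0..1) -> absolute luminance in nits."""
    p = signal ** (1 / M2)
    return PQ_PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits: float) -> float:
    """Absolute luminance in nits -> normalized PQ signal (0..1)."""
    y = (nits / PQ_PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


def displayed_nits(mastered_nits: float, display_peak: float) -> float:
    """Hypothetical naive tone map: hard-clip at the panel's peak.

    Content is shown at its mastered level, never brighter, and capped at
    whatever the display can physically reach.
    """
    return min(mastered_nits, display_peak)


signal = pq_inverse_eotf(1000)
print(f"1000 nits -> PQ signal {signal:.3f}")    # 0.752
print(f"Back again: {pq_eotf(signal):.1f} nits")  # 1000.0
print(displayed_nits(1000, display_peak=800))     # 800
print(displayed_nits(1000, display_peak=1500))    # 1000
```

Because PQ encodes absolute luminance, a 1000-nit highlight uses only about three quarters of the signal range, leaving the rest for brighter mastering.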

HDR Formats

There are two main formats of HDR: HDR10 and Dolby Vision.

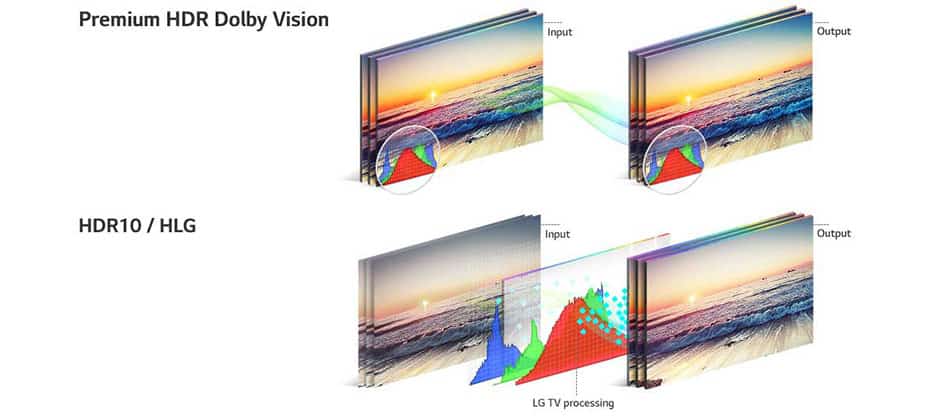

Dolby Vision can offer better image quality because it supports 12-bit color, while HDR10 is limited to 10-bit. Although there aren't any TVs with 12-bit panels yet, Dolby Vision down-converts its 12-bit signal to the panel's bit depth, which can still deliver superior 10-bit color.
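Bit depth sets how many steps each color channel can encode, and the gap between 10-bit and 12-bit grows quickly. The arithmetic below is illustrative only: it counts code values, not colors a particular panel can actually reproduce.

```python
def levels(bits: int) -> int:
    """Distinct code values per color channel at a given bit depth."""
    return 2 ** bits


for bits in (10, 12):
    per_channel = levels(bits)
    total = per_channel ** 3  # red, green and blue each take any of the levels
    print(f"{bits}-bit: {per_channel:,} levels per channel, {total:,} combinations")
# 10-bit: 1,024 levels per channel, 1,073,741,824 combinations
# 12-bit: 4,096 levels per channel, 68,719,476,736 combinations
```

Finer steps mean less visible banding in smooth gradients such as skies, which matters more when HDR stretches those steps across a wider brightness range.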

Furthermore, Dolby Vision applies its metadata on a scene-by-scene or even frame-by-frame basis, also referred to as Dynamic HDR, which provides a more immersive and realistic viewing experience. TVs with Dolby Vision also support HDR10.

HDR10, on the other hand, has static metadata, which applies to the content as a whole rather than each scene individually.
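The practical difference between static and dynamic metadata shows up in tone mapping, when a TV has to fit content brighter than its own peak into its range. The sketch below is a hypothetical illustration, not how HDR10 or Dolby Vision TVs actually process metadata: it uses a crude linear scale-down (real TVs mostly compress highlights, so the effect is much subtler), a made-up 600-nit TV, and made-up per-scene peaks. The single title-wide value plays the role of HDR10's MaxCLL (Maximum Content Light Level) field.

```python
from typing import List

DISPLAY_PEAK = 600.0                    # hypothetical TV peak brightness (nits)
SCENE_PEAKS = [400.0, 4000.0, 150.0]    # hypothetical brightest pixel in each scene (nits)


def scale_factor(content_peak: float, display_peak: float) -> float:
    """Crude linear tone map: how much to dim content so its peak fits the display."""
    return min(1.0, display_peak / content_peak)


def shown_peaks(per_scene_reference: List[float]) -> List[float]:
    """Brightest value actually shown in each scene, given the peak the TV plans around."""
    return [scene * scale_factor(ref, DISPLAY_PEAK)
            for scene, ref in zip(SCENE_PEAKS, per_scene_reference)]


# Static metadata: one title-wide peak (like MaxCLL) is used for every scene.
static = shown_peaks([max(SCENE_PEAKS)] * len(SCENE_PEAKS))
# Dynamic metadata: each scene carries its own peak.
dynamic = shown_peaks(SCENE_PEAKS)

print("static: ", static)    # [60.0, 600.0, 22.5]
print("dynamic:", dynamic)   # [400.0, 600.0, 150.0]
```

With one title-wide value, the dim 400-nit scene is darkened as if it were as bright as the 4000-nit one; with per-scene values it is left alone. Real tone mapping is gentler, but this is the trade-off dynamic metadata is meant to address.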

However, HDR10 is an open, royalty-free standard, which is why more content, including video games, supports it, whereas Dolby Vision requires licensing fees and is therefore more expensive.

Dolby Vision content won't always look better than HDR10, though. It depends on whether the video is played from a 4K Blu-ray disc or streamed online, whether the movie or TV show is an older title remastered to include HDR or a new one made with HDR in mind from the start, and so on.

This is explored more in our Dolby Vision vs HDR10 article.

HDR10+ is an improved version of HDR10. It adds support for dynamic metadata while remaining royalty-free, though like HDR10 it is limited to 10-bit color. Although there isn't a lot of HDR10+ content available at the moment, it is slowly but steadily gaining popularity.

Further, HDR10+ Technologies is also working on an HDR10+ Gaming standard that will include performance validation of variable refresh rate, HDR calibration and low-latency tone mapping.

Is HDR Worth It?

Overall, if you can afford it, you should invest in a TV with decent HDR support, as the improvement in image quality is worth the money.

If you are interested in HDR for monitors, you can learn more about it here.